DCO: Research Showcase

The Data Center Observatory (DCO) is a centerpiece of Carnegie Mellon’s attack on ever-growing data center operational costs. As a data center, it will provide a computation and storage utility to resource-hungry research activities such as data mining, design simulation, network intrusion detection, and visualization. As an observatory, it will provide invaluable real data to systems researchers seeking to understand the sources of operational costs and to evaluate novel solutions. Combining the two builds on Carnegie Mellon's tradition of actively using and showcasing new computing approaches, even as we invent them, allowing us to push the frontiers and stay at the forefront of technology.

HOW DOES THE DCO HELP?

The DCO enables us to target and attack the real challenges of data centers. First, it allows us to tackle one of the largest IT challenges of our time, data center operational costs, by providing a live environment to analyze and by exposing time losses and resource costs. The DCO has been designed with detailed monitoring instrumentation at every level: on power and cooling, on the software systems, and on human administration time. Researchers will be able to create detailed breakdowns over the long term and at particular points in time (e.g., during failure events), as well as drive automated problem detection and diagnosis research. Second, the DCO provides a real environment in which to test reasonably mature new technologies and measure how well they work. Without the DCO, researchers are left with little evidence to corroborate new ideas beyond argument, leading many to work on other problems.

EARLY RESEARCH ACTIVITIES

The DCO enables a broad range of research activities, beyond “simply” measuring and understanding operational costs. A few initial thrusts include:

(1) Adaptive power management. Carnegie Mellon teamed with APC to take advantage of their novel hot air containment approach, achieving energy savings from the beginning. We are also teaming with APC to explore new approaches to dynamically controlling which computers are on and off, based on application demands, saving energy at every level of the system.

(2) Automated storage management. Carnegie Mellon’s Parallel Data Lab (PDL) has been developing new architectures and tools for mitigating storage administration costs, and the Self-* Storage system that they are building will provide the DCO’s storage.

(3) Finger pointing in large-scale systems. Diagnosing problems is notoriously difficult in data center environments, and we are combining the DCO’s detailed instrumentation with on-line and off-line machine learning tools to automate the process of identifying the root causes of problems that arise.
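
As a rough illustration of the demand-driven on/off control mentioned in thrust (1), the sketch below picks how many machines to keep powered on for a given load. It is not the DCO's or APC's actual controller; the function names, the 20% headroom, and the load units are all hypothetical choices made for this example.

```python
import math


def machines_needed(demand, capacity_per_machine, headroom=0.2, min_on=1):
    """Number of machines to keep powered on for the given demand.

    demand and capacity_per_machine share the same (hypothetical) unit,
    for example requests per second. headroom is the fraction of spare
    capacity held in reserve; min_on is a floor so the pool never goes dark.
    """
    if capacity_per_machine <= 0:
        raise ValueError("capacity_per_machine must be positive")
    needed = math.ceil(demand * (1 + headroom) / capacity_per_machine)
    return max(min_on, needed)


def plan_power_changes(currently_on, demand, capacity_per_machine, total_machines):
    """Positive result: machines to switch on. Negative: machines to switch off."""
    target = min(total_machines, machines_needed(demand, capacity_per_machine))
    return target - currently_on


if __name__ == "__main__":
    # Assumed example: 10 machines are on, each handles 100 units of load.
    print(plan_power_changes(currently_on=10, demand=450,
                             capacity_per_machine=100, total_machines=900))
```

A real controller would also have to account for machine boot time, workload placement, and cooling response, none of which this sketch models.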

SOME DETAILS

- The DCO is located on the main floor of the Collaborative Innovation Center (CIC).

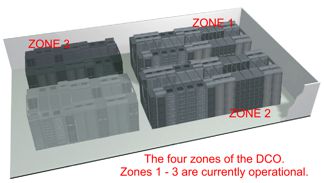

- Construction of the facility was completed in April 2006, and the first phase of computing equipment went on-line immediately thereafter.

- The second phase of computing equipment in Zone 2 of the DCO came online in October of 2008.

- Zone 3 came online in November 2012.

- The location was chosen to maximize visibility, and has a windowed wall for walk-by tours and an LCD display to continuously tell the research story and show measurement data (thermal graphs, utilizations, etc.).

- The DCO is 2,000 square feet in size, with an adjacent 1,100 square foot lab for administrator cubicles, storage, and unpacking and testing equipment.

- The DCO has been designed, in partnership with APC Corporation, to be fully instrumented and adaptively controlled, allowing measurement of all of the environmental aspects, including power consumption, temperature, humidity, chilled water consumption rate, and fan speed.

- APC In-Row™ cooling and Hot Aisle Containment™ enable remarkable equipment densities; the room has a peak capacity of 40 racks of computing equipment that consume 774 kW of power.

- In the event of a loss of power and/or the loss of the campus chilled water supply, the DCO has electrical and mechanical provisions to facilitate a controlled 4-minute shutdown.

- The Grey System, a universal and highly-secure phone-based access control system developed by Carnegie Mellon CyLab researchers, is used to control access to the DCO.

- A fiber optic network connects the DCO to the primary campus backbone.

- As of May 2015, there are 900 computers in the DCO, connected to 48 network switches, 87 power distributors, and 20+ remote console servers, with a total of 2100+ cables.

- There are a total of 7000+ CPU cores, 20+ TiB of memory, and 2.0 PB of disk space across 2100+ spindles.

- Eight air conditioners within the zones process the hot air back to normal building temperatures.

- 13 sensor nodes monitor environmental conditions in the room; most equipment can send email alerts about adverse conditions.
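
To put the peak-capacity figures in the list above in perspective, the short calculation below derives the implied power density per rack and per square foot. It uses only the numbers quoted in this document (774 kW, 40 racks, 2,000 square feet); the function and variable names are illustrative, and the result is a simple average, not a measured value.

```python
# Peak figures quoted in the details list.
PEAK_POWER_KW = 774
PEAK_RACKS = 40
FLOOR_AREA_SQ_FT = 2000


def power_density(peak_kw, racks, area_sq_ft):
    """Return (kW per rack, W per square foot) at peak capacity."""
    kw_per_rack = peak_kw / racks
    watts_per_sq_ft = peak_kw * 1000 / area_sq_ft
    return kw_per_rack, watts_per_sq_ft


kw_per_rack, watts_per_sq_ft = power_density(
    PEAK_POWER_KW, PEAK_RACKS, FLOOR_AREA_SQ_FT
)
print(f"{kw_per_rack:.2f} kW per rack")            # 19.35 kW per rack
print(f"{watts_per_sq_ft:.0f} W per square foot")  # 387 W per square foot
```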

CONTACTS

, PDL Director

(412) 268-1297

, PDL Executive Director

(412) 268-5485

, PDL Business Administrator

(412) 268-6716

Mailing Address:

Carnegie Mellon University

5000 Forbes Avenue

CIC Building, Second Floor

Pittsburgh, PA 15213-3891