ARGON

Performance Insulation for Shared Storage Servers

Services that share a storage infrastructure should realize the same efficiency, within their share of time, as when they have the infrastructure to themselves. Unfortunately, traditional systems offer nothing close to this ideal, with inter-service disk and cache interference creating large and unpredictable inefficiencies. A new metric, the R-value, is an explicitly configured lower bound on the storage efficiency a service will receive (relative to its non-sharing performance), no matter what services it shares the infrastructure with. The Argon storage server explicitly manages its resources to bound the inefficiency arising from inter-service disk and cache interference in traditional systems. The goal is to provide each service with at least a configured fraction of the throughput it achieves when it has the storage server to itself, within its share of the server -- a service allocated 1/nth of a server should get nearly 1/nth (or more) of the throughput it would get alone. The Argon storage server uses prefetching, write coalescing, cache partitioning, and quanta-based disk scheduling policies to ensure R-value commitments are met. For more information, see the extended overview.
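The R-value guarantee described above reduces to simple arithmetic: a service's throughput under sharing should be at least R times its share of the server times its standalone throughput. The sketch below is only an illustration of that bound, not Argon's implementation; the function names, parameter names, and the example numbers are hypothetical.

```python
def insulated_throughput_floor(standalone_mb_s: float, share: float, r_value: float) -> float:
    """Lower bound on a service's throughput when sharing the server.

    standalone_mb_s: throughput the service gets with the server to itself
    share:           fraction of the server allocated to it (e.g. 1/n)
    r_value:         configured efficiency bound, in (0, 1]
    All three names are illustrative assumptions, not Argon's API.
    """
    if not 0.0 < share <= 1.0:
        raise ValueError("share must be in (0, 1]")
    if not 0.0 < r_value <= 1.0:
        raise ValueError("r_value must be in (0, 1]")
    return r_value * share * standalone_mb_s


def meets_r_value(observed_mb_s: float, standalone_mb_s: float,
                  share: float, r_value: float) -> bool:
    """True if the observed shared throughput honors the R-value bound."""
    return observed_mb_s >= insulated_throughput_floor(standalone_mb_s, share, r_value)


# Hypothetical numbers: a service that reaches 100 MB/s alone, is given half
# of the server, and is configured with R = 0.9, should see roughly 45 MB/s
# or more while sharing.
floor = insulated_throughput_floor(standalone_mb_s=100.0, share=0.5, r_value=0.9)
print(f"guaranteed floor: {floor:.1f} MB/s")
print(meets_r_value(observed_mb_s=47.0, standalone_mb_s=100.0, share=0.5, r_value=0.9))
```

With R close to 1, the floor approaches the ideal of a full 1/n of standalone throughput for a service allocated 1/n of the server.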

|

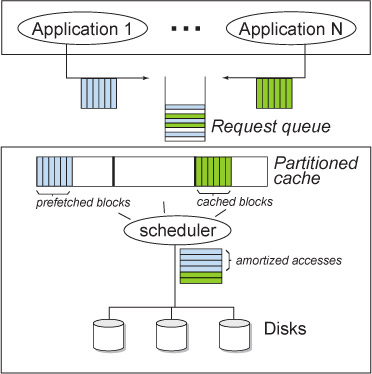

Argon’s high-level architecture. Argon makes use of cache partitioning, request

amortization, and quanta-based disk time scheduling. |

People

FACULTY

Anastassia Ailamaki

Chuck Cranor

Greg Ganger

Garth Gibson

STUDENTS

Matthew Wachs

Elie Krevat

Deepti Chheda

Eno Thereska

Michael Abd-El-Malek

Publications

- Co-scheduling of Disk Head Time in Cluster-based Storage.

Matthew Wachs, Gregory R. Ganger. 28th International Symposium on Reliable Distributed Systems, September 27-30, 2009, Niagara Falls, New York, U.S.A. Supersedes Carnegie Mellon University Parallel Data Lab Technical Report CMU-PDL-08-113, October 2008.

Abstract / PDF [245K]

- Argon: Performance Insulation for Shared Storage Servers. Matthew Wachs, Michael Abd-El-Malek, Eno Thereska, Gregory R. Ganger. Proceedings of the 5th USENIX Conference on File and Storage Technologies (FAST '07), February 13–16, 2007, San Jose, CA. Supersedes Carnegie Mellon University Parallel Data Lab Technical Report CMU-PDL-06-106, May 2006.

Abstract / PDF [167K]

Acknowledgements

We thank the members and companies of the PDL Consortium: Bloomberg LP, Datadog, Google, Intel Corporation, Jane Street, LayerZero Labs, Meta, Microsoft Research, Oracle Corporation, Oracle Cloud Infrastructure, Pure Storage, Salesforce, Samsung Semiconductor Inc., Uber, and Western Digital for their interest, insights, feedback, and support.